I’m not a computer programmer, so when I decided to look into the question of which programming language autonomous vehicles use, I quickly realized how naive that question really was.

Autonomous cars use a complex combination of hardware and software to do what they do. As I researched a bit more, however, I did find that certain languages and software packages do come up quite often. In fact, several competing manufacturers even use some of the same underlying technologies.

As seems to be true of a lot of computer programming, knowledge of complex, object-oriented languages like C++ is an important skill. Then there are languages that are considered much easier to use for various other kinds of software. Specifically, Python seems to come up a lot in the case of autonomous vehicles.

So what are some other key software packages or technologies that I should know about (in no particular order)?

There are literally hundreds of underlying software packages, libraries, and other technologies in the discussion about autonomous vehicles. Many of these are particular to one car manufacturer or are seemingly only important if you’re an artificial intelligence specialist trying to advance the field.

On the other hand, as a layman, I found a handful of terms coming up often enough that I felt compelled to look further into them, just to feel like I have a better understanding of the fundamentals. Having them all in one place as a primer could have saved me some time, so here they are:

TensorFlow

TensorFlow is an open-source machine learning platform that many autonomous car makers use. It was developed by Google, so it makes sense for it to be used at Waymo, but it’s applied much more widely than that.

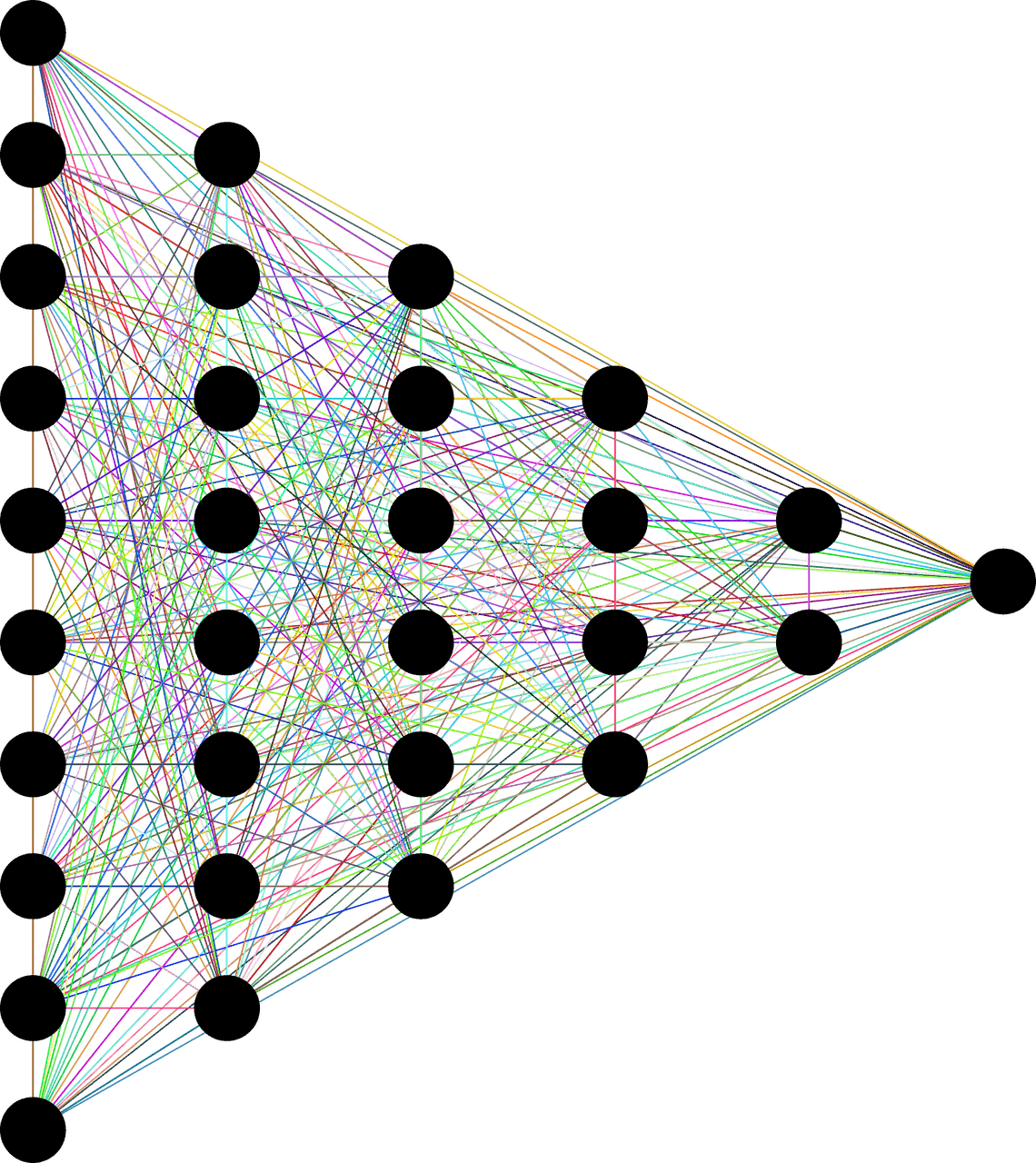

The platform is used to build deep learning models: neural networks that are fed lots of data and, as a result, help autonomous vehicles do what they do better.

What does that actually mean? As far as I can tell, scientists feed a whole bunch of information into neural networks (layered mathematical models, rather than networks of physical machines) that analyze that data at an abstract level, so that the scientists themselves aren’t even totally sure what the networks are doing, and then come back with an answer. The scientists are able to test the responses to see whether they are accurate, so the analysis is helpful, even though they can’t easily explain how the analysis itself is working.

It seems totally crazy to me, but also seems to be true.
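To make that a little more concrete, here is a minimal sketch in plain Python rather than TensorFlow itself. It trains a single artificial “neuron” (about the smallest neural network there is) to decide whether a car should brake. Everything in it is an assumption made up for illustration: the braking rule, the toy data, and all of the function names. Nothing here comes from a real vehicle or from TensorFlow’s API. The point is only to show the pattern described above: feed in examples, let the numbers adjust themselves, then test the answers on examples the network has never seen.

```python
import math
import random


def sigmoid(z):
    """Squash any number into the range 0..1 (numerically stable form)."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def make_examples(n, rng):
    """Invented toy data: (distance, speed) pairs, both scaled to 0..1.

    The made-up rule is: brake (label 1) when distance is less than speed.
    """
    examples = []
    for _ in range(n):
        distance, speed = rng.random(), rng.random()
        examples.append(((distance, speed), 1 if distance < speed else 0))
    return examples


def predict(weights, bias, features):
    """Return the neuron's confidence (0..1) that the car should brake."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)


def train(examples, epochs=200, learning_rate=0.5):
    """Nudge the weights a little after every example (stochastic gradient descent)."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = predict(weights, bias, features) - label
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


def accuracy(weights, bias, examples):
    """Fraction of examples where the rounded prediction matches the label."""
    correct = sum(
        1 for features, label in examples
        if (predict(weights, bias, features) >= 0.5) == (label == 1)
    )
    return correct / len(examples)


rng = random.Random(0)                 # fixed seed so runs are repeatable
training_data = make_examples(200, rng)
test_data = make_examples(100, rng)    # held back; never used in training

weights, bias = train(training_data)
print("learned numbers:", weights, bias)
print("accuracy on unseen examples:", accuracy(weights, bias, test_data))
```

With only three learned numbers, this toy is still easy to interpret. A real driving network has millions of them, which is roughly why checking its answers on held-back data is so much easier than explaining how it arrived at them.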

Mobileye

Mobileye is an Intel company that boasts on its website about having more than 25 automaker partners. They list “BMW, Audi, Volkswagen, Nissan, Ford, Honda, General Motors and more.” For that reason, you will hear about Mobileye quite a bit as you look into autonomous vehicles. The technology is used to allow cars’ cameras to sense and map the environment. This then leads to autonomous driving support technologies and abilities that we’ve discussed quite a bit: automatic braking, lane keeping assistance, etc.

Other impressive numbers: Mobileye’s technology is installed in more than 40 million cars on the road today, and the company is working with 13 car manufacturers specifically on the problem of autonomous driving (whereas some of its partners use the technology to support what would be considered safety features, rather than autonomy).

Cruise

Cruise is an autonomous driving company that was purchased by General Motors and now represents the technology underlying their vehicles.

Cruise’s website further highlights four parts of its technology: WebViz, which it uses for efficient data visualization; Scene Edit, which allows for real-time feedback on 2D simulations; Cartographer, which it uses to map the car’s environment; and Starfleet, which monitors and controls all of the vehicles that are on the road.

openpilot

Comma.ai uses openpilot (all lowercase with no space) to bring self-driving technology to cars from Toyota, Honda, and other car makers. It’s another piece of open-source software, and it is used to accomplish many of the tasks that we associate with autonomous driving.

NVIDIA Drive

If you’re like me, when you hear “NVIDIA” you think about a graphics card in your personal computer, but the NVIDIA Drive platform is actually one of Mobileye’s stiffest competitors. The platform is an open combination of hardware and software that powers many Advanced Driver Assistance Systems (ADAS) features and is part of current development work on fully autonomous vehicles.

Autonomous Vehicles and Deep Learning / Artificial Intelligence

Again, I come to the topic of autonomous vehicles as an interested layman, so none of the technologies that I’m mentioning here were known to me before I started researching.

I didn’t think I’d be looking into artificial intelligence, deep learning, and neural networks when I set out to learn more about self-driving cars, but I quickly found out that’s where so much of this technology points.

Essentially, since autonomous vehicles will need to operate at superhuman levels in order to be widely adopted, scientists are currently using neural networks, which can show superhuman abilities on some narrow tasks, to both perceive and analyze the world.

The Goldilocks zone for me is conversations or media that mention the underlying technologies used in autonomous driving without getting overly “in the weeds” with technical jargon or elaborate algorithms.

A Great Resource

One resource that I’ve found particularly useful and interesting as someone who doesn’t have a background in any of this stuff is the website and podcast that are run by M.I.T. researcher Lex Fridman. Fridman works in this field himself, but his interests are refreshingly wide-ranging. He interviews people who are leaders in their own (usually related) fields, and the resulting conversations draw useful connections between perspectives that don’t often intersect.

It’s refreshing to listen to a philosophical conversation about perception, for example, rather than a technical conversation about computer chips, which would be over my head.

I’d definitely recommend giving the podcast (or YouTube show) a listen (or a watch).